A new ground-breaking feature in SQL Server 2016 allows report user context to be passed through shared data sources, enabling role-based, row-level security. This feature doesn’t require complex administration configurations, service principal delegation, Kerberos, Claims/Windows Token conversion or impersonation. You may not be as excited as I am about this unless you’ve also lived through the tedious configuration process we’ve had to endure for many years until recently.

A little over a week ago I heard from my friend and past co-author, Robert Bruckner – a Senior Architect and Development Lead on the Reporting Services product team. He told me I needed to check out a new capability they had just enabled in SQL Server 2016 that would make it much easier then before to implement row-level security for SQL Server Analysis Services. I had to check it out for myself and was please to discover that this technique works with Reporting Services mobile and paginated reports. It also works in a variety of server architectures. I tested it in a domain environment and on a simple workgroup server. It will work in scaled-out scenarios, and with or without SharePoint in the mix.

Until now, there were two major blocking points to authenticate report users on the SSAS server so data could be restricted or filtered for each user. Kay Unkroth talks about the prior authentication landscape in his post to the SQL Server Reporting Services Team Blog titled Passing user names to Analysis Services with personalized connection strings in SQL Server 2016 Reporting Services. He describes the challenges in more detail and I’ll summarize from my experience.

1. The first challenge is that user context gets lost as database connections are passed from one service to another. Before we get into the weeds with this story, let’s just categorize this as complicated and confusing. It raises high-visibility questions and concerns that necessitate handing-off to support and administrative folks to take care of settings and components outside the environments you manage – namely the database and report servers.

Not long ago when we tried to use SharePoint as the platform for all BI and reporting systems; getting connection and user information to pass through through all the layers in the server architecture was more like running a relay race… a user logged into SharePoint and navigated to a report. At the point, the credential object for the user was exchanged for a Claims Token. The user who chose to run the report and the Claims Token had to be delegated to a Windows service principal name (SPN), which, in-turn was delegated to the service account running a specific service on the Windows server. It was then up to the service to interface with your database in Analysis Services. Without getting into more details; the service account was not the user who had access to certain important data. For the service account to connect to the database at all, it needed to have either admin privileges – a potential security risk – which is now being handled by your system or database administrators (who probably know very little about your reporting solution, your users’ need or business objectives. They just want to make sure it has no security holes and will lock-down anything suspicious or unfamiliar.

2. The second challenge is that Reporting Services requires shared data sources with stored user credentials, in order to use a lot of it’s advanced features like shared datasets (under some conditions), data-driven subscriptions, caching, history or snapshots. In every case, the credentials of the user actually running the report get exchanged for the service proxy account and the original user context is lost. Analysis Services sees the same user connect for every report request. A security genius might conclude that these are all issues that can be handled through some security configuration mechanism – and they would be right. The problem is that they often don’t get handled because of the complexity or the simple fact that management falls within another team’s realm of responsibility.

Over the many years that Reporting Services has been a leading reporting tool in the industry, practitioners have asked if they could somehow add an expression to a shared data source definition that would inject the username into the connection string so it could be deciphered in the server. Interesting idea with a lot of reasons that it wouldn’t happen – – sort of.

Using conventional approaches, where principal delegation was configured and used correctly – which may or may not involved the Kerberos protocol and NTLM Authentication.

The New And Improved Technique: Using the CUSTOMDATA Property

Drumroll, please… Here’s the big change that allows new, cool things to happen.

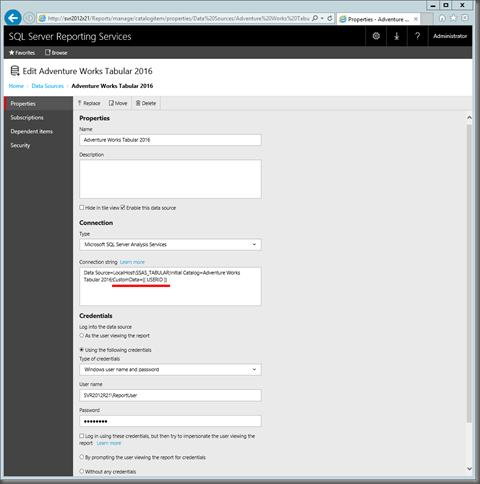

In every data source connection string, you can add a simple expression that maps the current Windows username to the CUSTOMDATA property of the data source provider. This works in SSRS embedded data sources, shared data sources, in a SharePoint Office Data Connecter (ODC) file and in a SharePoint BISM connection file. In each case, the syntax should be the similar. Here is my shared data source on the SSRS 2016 report server:

The connection string modification is simple. Just add this text to the end, following a semicolon:

;CUSTOMDATA = {{ USERID }}

This value travels through the connection stack when ever a connection is open on the database server. Since the CUSTOMDATA connection property value is assessable and exposed by query and expression languages and APIs on the server, code can be written to respond to the user. In the case, we will filter queries with records and data that only the current user should see.

Windows and Report Users

Windows users, whether added to Active Directory, whether on domain-joined computers or on a simple workgroup server, are standard Windows users and need not be added to the Local or Domain Administrators group. Any SSAS users with restricted data access should also not be added to any Analysis Services administrator role.

As you can see, Paul and Fred are standard Windows users with limited access to elevated resources.

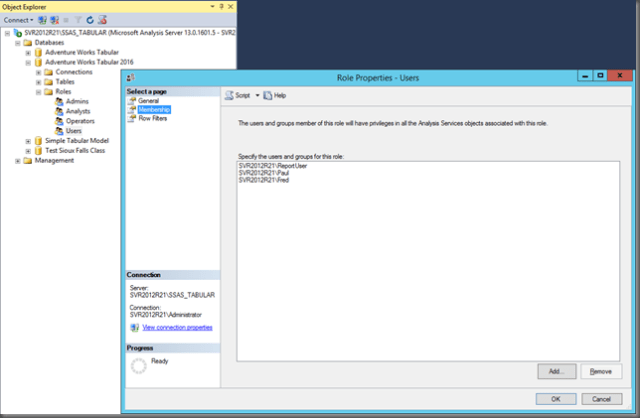

Analysis Service Roles and Membership

I’ve tested this technique with both SSAS multidimensional and Tabular, with the same results. I’ll show you the set-up on a named instanced on my server, SVR201R21, named SSAS_Tabular. The SSAS Security properties page for the instance show that only the Administrator local admin user and the SSAS service accounts. This is important to check because if you accidentally add a user as an administrator, row filtering won’t work. Testing and debugging solutions on a single development machine requires a lot of logging out and logging back in to test under different user conditions.

My SSAS Tabular database is named Adventure Works Tabular 2016. Under the database Roles, properties for the Users role, you see that there are three users; Fred, Paul and ReportUser. The ReportUser is our proxy users. When we need to setup shared a data source with saved credentials, I supply the user name and password for the ReporrUser account.

It’s important to note that the ReportUser must have Read access to the database in order to connect and execute queries. But, since that same account may be used to connect on behalf of others users, the ReportUser account will have restricted access to data through the security bridge tables that are implemented by the role’s row-level filtering expressions.

Row-level Filtering Bridge Table

The core approach for row-level, user-specific filtering is the same as always but the programmatic implementation is only slightly different. This is the essential foundation of that feature. The following table image demonstrates tow things. One is that the table itself can be completely generated using DAX. This is a calculated table (one of many new cool features added in SQL Server 2016). I’ve just used inline ROW functions to added each row to the UNION function that defines the entire table.

In production, you may want to store this lookup data in a SQL Server table for easy maintenance, and then process it into the model as you would all you tables.

For each row, the UserName is an exact match for the value passed in from the UsersName token in the connection string, which will be presented by the CUSTOMDATA DAX function. Do you see how this will work? For testing and debugging, you can add a couple of measures useful for know who the current user is and what the CUSTOMDATA function value returns.

User Name:=USERNAME()

Connected User:=CUSTOMDATA()

In a Reporting Services report, you can add these measures to your query and then add the dataset fields to textboxes in your report.

Implementing a Role-based Row-level Filter

Start by opening the Roles window…

Checking the user membership of the Users role; ReportUser must be a member if the report server or client tool is connected as ReportUser. Even though no records are mapped to this user in the security bridge table, it has served it’s purpose by getting us connected to the database.

Paul and Fred are in the role in case they connect on their own, like from Excel or from SSRS using a data source without stored credentials.

This demonstration scenario calls for filtering all the data related to the Sales Territories for user who have permission to see it, according to the Country/User mapping in the bridge table. If the data related to other business entities should have user-specific filtering, you would follow the same pattern and multiple filters will be in effect.

The filter on the Sales Territory table is a DAX expression. You can use other functions to provide additional logic but this is a good example that demonstrates the essential pattern. As each row is scanned during a query, the expression will determine if there is a match. If so, the row is returned. If there is no match, the row is ignored and not returned in the query results.

Here’s the filter expression with e brief explanation:

=’Sales Territory'[Sales Territory Country]

= LOOKUPVALUE(‘Security Bridge Country'[Country],

‘Security Bridge Country'[Country], ‘Sales Territory'[Sales Territory Country],

‘Security Bridge Country'[UserName], CUSTOMDATA()

)

(again, each row in the table is considered in turn)…

Does the current row’s [Sales Territory Country] field value match the value of…

the result of the LOOKUPVALUE function which looks in the Security Bridge Country table for a Country matching the current Sales Territory[Country] AND where the User filed value on the same row matches the value returned by the CUSTOMDATA function.

Let’s try it out…

My Reporting Services report uses a data source where I have added the text ;CUSTOMDATA = {{ USERID }} to the end of the connection string.

The dataset is a simple MDX query that returns the Sales Territory Country, the Connected User test measure and the Reseller Total Sales measure. Notice that when the query runs locally in the designer, the CUSTOMDATA function returned the literal text in the parameter token. This is expected.

Here’s the report design. I’ve added the Connected User measure so you can see what the CUSTOMDATA function returns, along with the filtered results.

Run as the Administrator user, only the Country row for the corresponding row in the Security Bridge table is returned.

Let’s test the report as a real user.

I’ll sign-out as Administrator and then sign-in as Paul…

Running the deployed report in SSRS web portal this time, only the Country records are returned for this records that were mapped to my user name in the Security Bridge Country table. Furthermore, if I were to run a query that didn’t explicitly use the Sales Territory Country, only the records related to the countries in my filter scope would contribute to any totals due to the relationships in the model.

As I mentioned earlier, I also tested this in a domain environment and it also worked exactly as expected.

This means that the next time I’m called on to implement a row-level security solution, I won’t have to keep the system administrator up all night setting up complicated service principal names and configuring authentication delegation. If I have rights to setup the SSAS server and the data sources on the report server, I can now do all the configuration work myself – and that is pretty exciting.

Thanks again to Robert Bruckner for bringing this to my attention, and to Kay Unkroth for laying out the basics in his post. And a big big thanks to the Reporting Services product team for making making this a reality and saving the next generation of BI solution practitioners from reliving the challenges of the past.

Hi All,

I have developed an SSRS report from SSAS cube which already have roles and members defined and then deployed on POWER BI server. After deployment i have added customdata property to the data source. But i am not able to see particular clients data. Is there anything that i need to change in SSRS report. Please suggest.

Thank You.

Hi Paul,

I am actually trying to do this exact thing in SSRS and I’m not sure, based on your article, how to implement this. I see that I can add the CustomData string to the data source connection string, but how would i then use that to connect to my bridge table that contains the UserIDs? Can you elaborate?

Thanks,

Jeff

Hi Jeff,

Did you get your answer here? Actually i am not getting how to implement this from this article. I just understood to define the custom data property in the data source but how to pass the user ID from SSRS report or in SSRs report i am not getting.

Please let me know if you get any information regarding this. You can share if any helpful link you have.

Thank You.

Hi Aashu,

I was able to work around this by storing user credentials in a SQL table, and joining my source queries for the parameter selections to that table. I then just added custom code to the report to extract the username of the user running the report and passed it to the data sets as a parameter value, which filtered the parameter selection.

Hi,

The role DAX command won’t work if we use the Tabular model in both Excel and SSRS. I coded the following and still can’t seem to get it to work properly.

=IF (

CUSTOMDATA () = “”,

GLPlant[GLPlantID]=LOOKUPVALUE(GLPlantSecurity[GLPlantID],GLPlantSecurity[Username],USERNAME(), GLPlantSecurity[GLPlantID],GLPlant[GLPlantID]),

GLPlant[GLPlantID]=LOOKUPVALUE(GLPlantSecurity[GLPlantID],GLPlantSecurity[Username],CUSTOMDATA(), GLPlantSecurity[GLPlantID],GLPlant[GLPlantID])

)

Any suggestions appreciated.

Regards,

Ferris

Thanks Paul,

Your steps of using CUSTOMDATA is awesome. After implementing custom authentication and Row Level Security in 2016 cube I was really stuck to wire these two.

Still we require to provide one windows account to connect to cube, Is there any possibility to avoid this?

Thanks,

Kannan Kandasamy

Hi Paul,

really interesting article, my colleague and I went through the pain of setting up Kerberos in the past so this is great news!

We have followed through your example, but in order to get it to work we had to check “log in using these credentials, but then try to impersonate the user viewing the report” when setting up our data source to analysis services (which In your screen shot above is not ticked).

In ticking this though, we then cannot setup a cache plan against that data source. Leaving it as “always run this dataset with the most recent data” doesn’t work either, the value doesn’t ever change even after reprocessing the cube. We understand why it doesn’t work as it doesn’t know the list of users that would connect to be able to store a cache plan for each, but we seem to be stuck with either not being able to retrieve an up to date value of a KPI or not being able to only return the part of the KPI that is relevant to the logged on user.

We don’t know if the “always run this dataset with the most recent data” not working is a bug to be fixed or just a “feature”, but do you have any thoughts on how we may be able to achieve our goal?

Many thanks in advance

Lee.